Building on the success of its next-generation firewall business, Palo Alto Networks now leads across multiple competencies in the cybersecurity space, including network security, cloud security and security operations. The company has a unique ability to integrate new technology quickly to compete in new verticals. For more insight into how it is accomplishing this, we talked with Tim Junio, SVP of Products, Cortex at Palo Alto Networks and former CEO of Expanse, a recent Palo Alto Networks acquisition.

Tim was conducting cyber operations for the CIA before he was old enough to drink, performed consulting work for DARPA and helped build out cyber operational capabilities for the U.S. military. He explained how a onetime DARPA prototype became what is now known as attack surface management technology, and how it has since evolved into Palo Alto Networks’ Cortex Xpanse.

“Going back to 2013-14, when we were first thinking about what ultimately became the core technology for Cortex Xpanse today, we observed that the Internet was kind of a mess,” Tim stated. “As soon as we started looking at a large scale for exploitable systems, we found a huge number. The premise for defenders back then was to try and do penetration testing and always be looking for weak links. But the idea that you can in an automated fashion find exposures as soon as they come up, like within minutes, was not a reality that defenders were prepared for.”

Tim compared this situation to what Palo Alto Networks’ Cortex Xpanse is accomplishing today. “Now it’s really taking an attacker’s view of the organization,” he shared. “It asks key questions such as: ‘What applications are exploitable? What systems are available? Are there any misconfigurations?’ It is the bane of security if you don’t really know what you’re protecting against; if you don’t really know what it looks like. So it made sense for Palo Alto Networks to make this acquisition.”

The right formula for security acquisitions

“After folding in multiple acquisitions over the years, Palo Alto Networks has gotten even better at this process. Before the acquisition of Expanse even closed, we were talking about where our technology could plug in, including the obvious fit with Palo Alto Networks’ next-generation firewalls to ensure that we’re actually protecting the entirety of an internet protocol space. But there are also some not-so-obvious areas including areas for co-development such as Prisma Cloud. We were able to work with this cloud security product to provide a combined view with Xpanse that can show the customer unmanaged cloud assets and find vulnerable systems within cloud environments so that they can be brought under proper management through the Prisma Cloud product.”[1]

Tim went on to explain how Xpanse is helping Palo Alto Networks to build out data lakes that contain a wealth of security information. He described how they have been prototyping attack surface data to gain a better understanding of how mergers and acquisitions can alter the security situation that a business is facing. “When you add in the complexity of mergers and acquisitions, and business units operating globally, an organization may not really appreciate the vulnerability that it’s facing,” Tim shared. “We show up to customers all the time and remind them, ‘Hey, you’re doing this joint venture, did you know you’re using Alibaba’s cloud? You’re not just an Azure shop anymore.’ And they realize they weren’t centrally tracking that as an organization.”

How Palo Alto Networks defines XDR

Evolving from the early days of attack surface management technology, XDR brings next-level thinking to the entire concept of vulnerability and threat detection and mitigation. Who better to define what XDR means today than Palo Alto Networks, the company that first coined the term and the thinking behind it? When Tim was asked about the essential criteria that should fall into the expectations for XDR, he replied that the most important idea is the evolution of endpoint detection and response. “Protection, prevention and detection requires joining endpoint data with other data,” he shared. “Basically, if you’re dependent on only one source of information at a time for security, you’re going to miss sophisticated attacks.”

Tim believes that combining endpoint data with network security data is an essential place to start. “If you look at the prior era of endpoint protection, that is where you started to have behavioral analysis and looking at things happening locally on a machine,” he said. “And that obviously was a huge leap in technology that was efficacious for a while, but then adversaries adapted and started doing a better job of obfuscation. We needed a new approach, and joining endpoint data with network data gave us new kinds of visibility. If you’re looking across different data sets, your odds dramatically improve that the attacker is unable to obfuscate across everything.”

Not simply more data

But Tim also clarified the importance of not just consuming data for its own sake. “I think that is the difference between XDR and SIEM,” he stated. “Security Information and Event Management was supposed to be the answer to this problem of the modern SOC. But there are too many alerts and people are overwhelmed, so it doesn’t stop enough attacks. When we look at a data ingestion model, we need to ask if we’re providing anything useful or just aggregating. How much of that data is used in true correlation? While SIEM lets you do that in a highly manual, human-driven way where you need to do much of the data normalization yourself, XDR starts with the highest quality, most important security data where the intent is not to take 200 different data sources and run queries over them.”

“The difference in how an XDR product would work versus SIEM would be that XDR would normalize between datasets so that you actually know the relationships between them for the highest quality data and then you run advanced analytics on top of them,” Tim continued. “For XDR the data integration component is fundamental. For our own XDR we do the data integrations natively for the product. We create what we call a story. A story is basically the joined relationships between different data sets, starting with our endpoint agent from Cortex XDR and our next-generation firewalls. But we also bring in third-party data and we’re perfectly happy to work with competitors’ data, make that available within XDR and join it with either the next-generation firewall or our endpoint.”
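To make the idea of normalizing datasets and joining them into a "story" more concrete, here is a minimal Python sketch. It is an illustration only and not how Cortex XDR is implemented: the `Event` type, the raw record shapes, the `normalize_*` helpers and the five-minute window are all assumptions made up for this example. It maps two differently shaped feeds onto one schema, then groups events from the same host that occur close together in time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    """Common schema that every data source is normalized into (hypothetical)."""
    source: str          # which dataset the record came from
    host_ip: str         # shared key used to relate records across datasets
    timestamp: datetime
    detail: str


def normalize_endpoint(raw: dict) -> Event:
    # Assumed endpoint-agent record shape: {"ip", "time", "process"}
    return Event("endpoint", raw["ip"], datetime.fromisoformat(raw["time"]), raw["process"])


def normalize_firewall(raw: dict) -> Event:
    # Assumed firewall log shape: {"src", "ts", "dest"}
    return Event("firewall", raw["src"], datetime.fromisoformat(raw["ts"]), "connection to " + raw["dest"])


def build_stories(events, window=timedelta(minutes=5)):
    """Group events per host, splitting whenever the gap between
    consecutive events on that host exceeds the window."""
    by_host = {}
    for event in sorted(events, key=lambda e: e.timestamp):
        by_host.setdefault(event.host_ip, []).append(event)

    stories = []
    for host_events in by_host.values():
        current = [host_events[0]]
        for event in host_events[1:]:
            if event.timestamp - current[-1].timestamp <= window:
                current.append(event)
            else:
                stories.append(current)
                current = [event]
        stories.append(current)
    return stories
```

Once both feeds share a schema, the join is a simple grouping step. That is the contrast Tim draws with the SIEM model, where much of the normalization is left to the analyst.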

Tim concluded by providing a glimpse of what this will all look like as part of the next evolution within Palo Alto Networks’ Cortex XDR 3.0 platform[2]. “If you’re a customer of XDR 3.0 and you’re connecting XDR endpoint data, plus let’s say data from Amazon Web Services plus data from our next-generation firewalls, we’re going to be running our analytics over all of those datasets and we’ll provide you with scored alerts across the datasets within the XDR unified console,” he said. “I would add to that we’re also pushing results into workflows. Our XDR product is a playbook automation product where we can automate a wide range of responses and have hundreds of integrations built into that product. If we can’t automate it, we can at least augment the workflow automatically for human analysts and provide as much context as possible to speed up the time-to-respond. If you look at this holistically, I think that there are four pieces here: There’s the gathering of data, the integration of data, the analysis of data, and then the workflow. And I think what’s really hard is to get all of those things to work well together. And so where we’re really starting to excel is within that overall integration component.”
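The four pieces Tim lists (gathering, integration, analysis and workflow) can be sketched as a small Python pipeline. Everything here is hypothetical: the record fields, the rule that scores an alert by counting the distinct sources that corroborate it, the `auto_threshold` parameter and the action names are assumptions chosen for illustration, not the analytics or playbooks of any real product.

```python
from collections import defaultdict


def gather():
    # Stage 1: collect raw signals. These sample records are invented.
    return [
        {"source": "endpoint", "host": "10.0.0.5", "signal": "suspicious process"},
        {"source": "firewall", "host": "10.0.0.5", "signal": "unusual outbound traffic"},
        {"source": "cloud", "host": "10.0.0.5", "signal": "new access key created"},
        {"source": "firewall", "host": "10.0.0.9", "signal": "port scan"},
    ]


def integrate(records):
    # Stage 2: relate records from different datasets through a shared key.
    grouped = defaultdict(list)
    for record in records:
        grouped[record["host"]].append(record)
    return dict(grouped)


def analyze(grouped):
    # Stage 3: score each host by how many distinct sources flagged it
    # (an assumed, deliberately simple scoring rule).
    alerts = []
    for host, records in grouped.items():
        sources = sorted({r["source"] for r in records})
        alerts.append({"host": host, "sources": sources, "score": len(sources)})
    return sorted(alerts, key=lambda a: a["score"], reverse=True)


def to_workflow(alert, auto_threshold=3):
    # Stage 4: automate when confidence is high, otherwise hand the
    # analyst an alert that already carries its supporting context.
    if alert["score"] >= auto_threshold:
        action = "run_playbook"
    else:
        action = "enrich_for_analyst"
    return {"host": alert["host"], "action": action, "context": alert["sources"]}


if __name__ == "__main__":
    for alert in analyze(integrate(gather())):
        print(to_workflow(alert))
```

Each stage only consumes the output of the one before it, which illustrates why the integration step matters: the scoring in `analyze` works only because `integrate` has already related records from different sources to the same host.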

[1] Source: https://www.paloaltonetworks.com/blog/prisma-cloud/manage-unmanaged-cloud-prisma-cloud-and-cortex-xpanse/

[2] Source: https://www.paloaltonetworks.com/company/press/2021/palo-alto-networks-launches-cortex-xdr-for-cloud–xdr-3-0-expands-industry-leading-extended-detection-and-response-platform-to-cloud-and-identity-to-detect-and-stop-cyberattacks

-

Pulling the Unified Audit Log

During a Business Email Compromise (BEC) investigation, one of the most valuable logs is the Unified...![]()

Announcing the Latest Cyber Threat Intelligence Report: Unveiling the New FakeBat Variant

Critical Start announces the release of its latest Cyber Threat Intelligence Report, focusing on a f...Cyber Risk Registers, Risk Dashboards, and Risk Lifecycle Management for Improved Risk Reduction

Just one of the daunting tasks Chief Information Security Officers (CISOs) face is identifying, trac...![]()

Beyond SIEM: Elevate Your Threat Protection with a Seamless User Experience

Unraveling Cybersecurity Challenges In our recent webinar, Beyond SIEM: Elevating Threat Prote...![]()

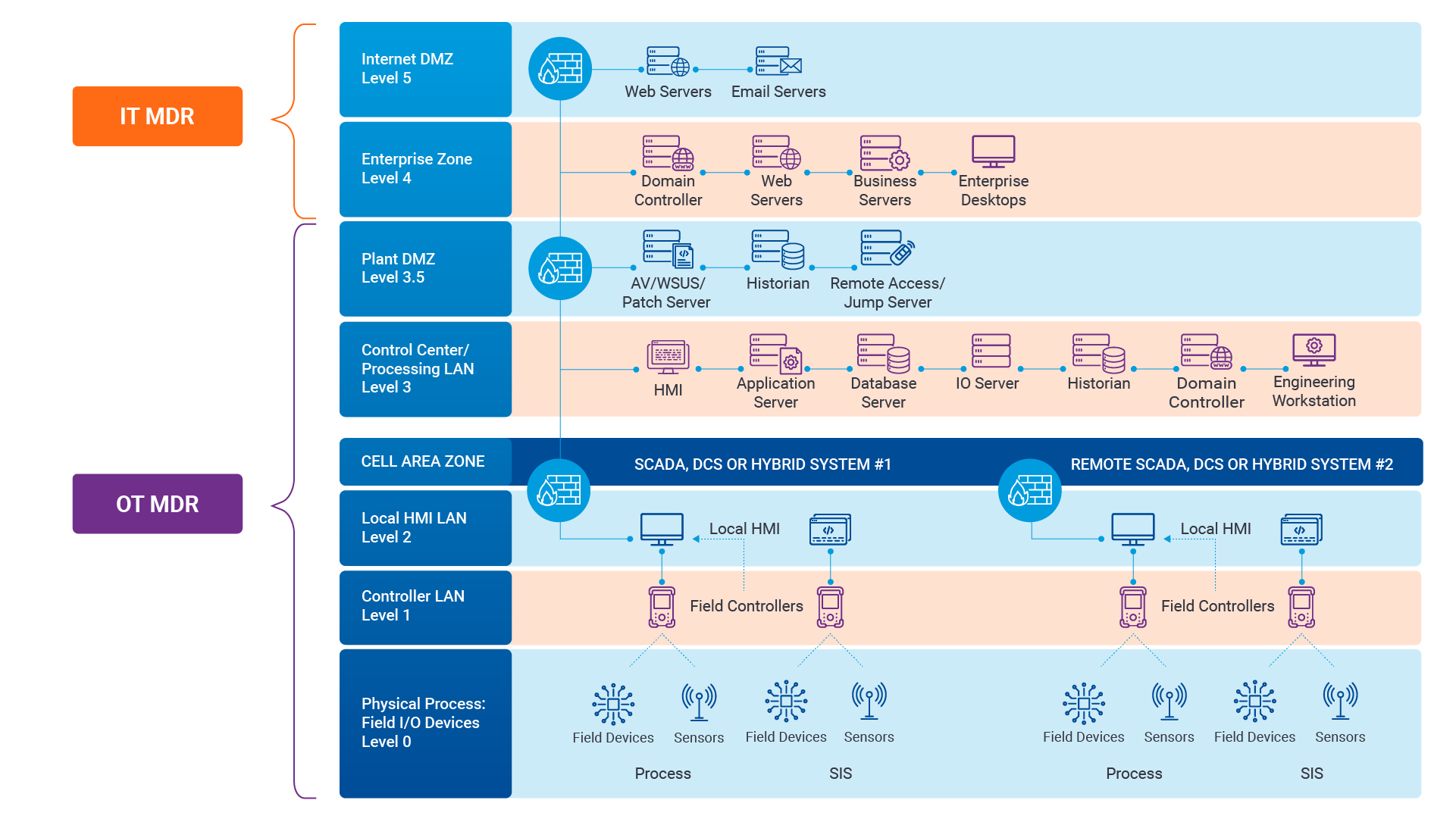

Navigating the Convergence of IT and OT Security to Monitor and Prevent Cyberattacks in Industrial Environments

The blog Mitigating Industry 4.0 Cyber Risks discussed how the continual digitization of the manufac...![]()

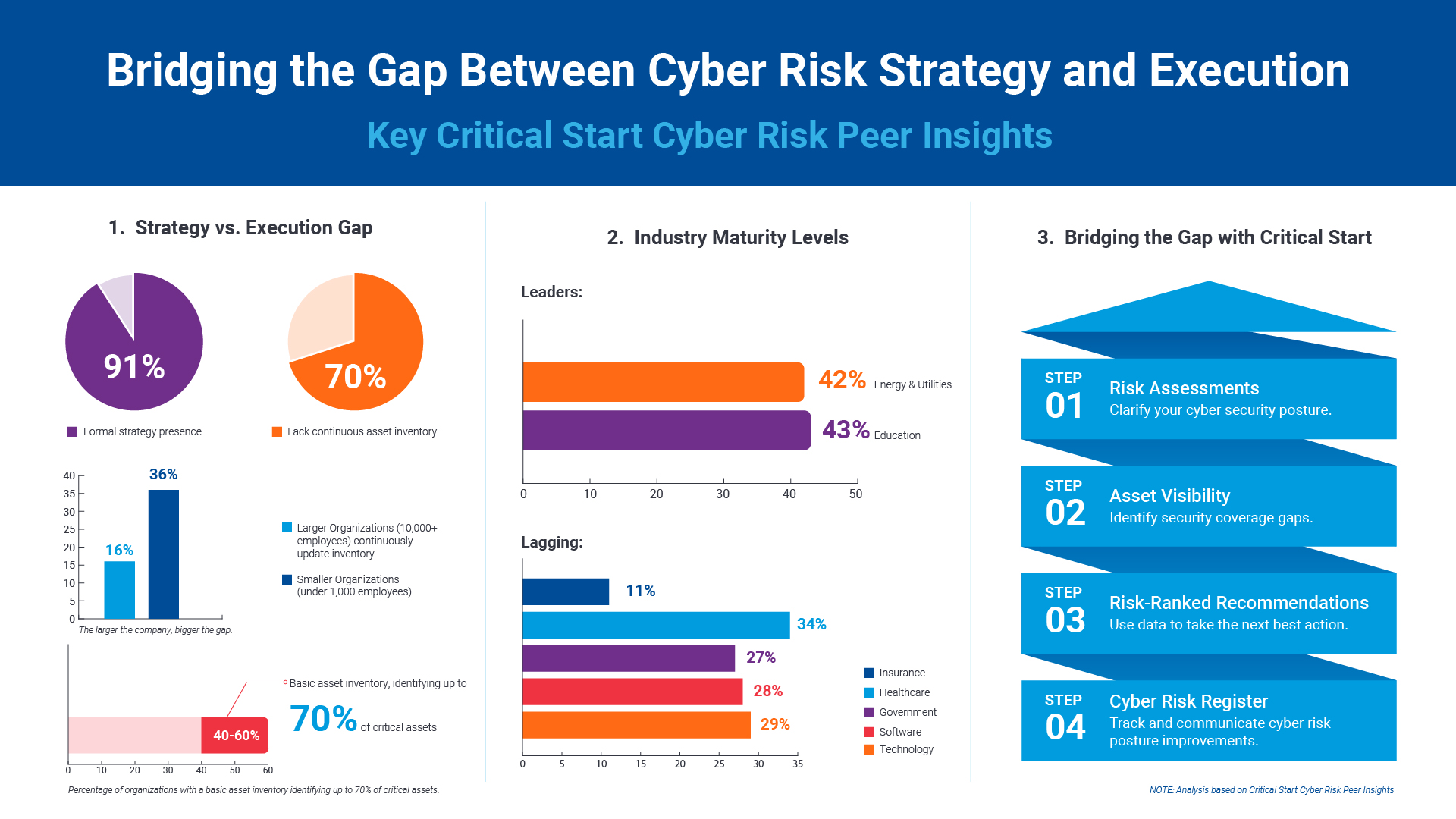

Critical Start Cyber Risk Peer Insights – Strategy vs. Execution

Effective cyber risk management is more crucial than ever for organizations across all industries. C...![]() Press Release

Press ReleaseCritical Start Named a Major Player in IDC MarketScape for Emerging Managed Detection and Response Services 2024

Critical Start is proud to be recognized as a Major Player in the IDC MarketScape: Worldwide Emergin...Introducing Free Quick Start Cyber Risk Assessments with Peer Benchmark Data

We asked industry leaders to name some of their biggest struggles around cyber risk, and they answer...Efficient Incident Response: Extracting and Analyzing Veeam .vbk Files for Forensic Analysis

Introduction Incident response requires a forensic analysis of available evidence from hosts and oth...![]()

Mitigating Industry 4.0 Cyber Risks

As the manufacturing industry progresses through the stages of the Fourth Industrial Revolution, fro...![]()

CISO Perspective with George Jones: Building a Resilient Vulnerability Management Program

In the evolving landscape of cybersecurity, the significance of vulnerability management cannot be o...![]()

Navigating the Cyber World: Understanding Risks, Vulnerabilities, and Threats

Cyber risks, cyber threats, and cyber vulnerabilities are closely related concepts, but each plays a...The Next Evolution in Cybersecurity — Combining Proactive and Reactive Controls for Superior Risk Management

Evolve Your Cybersecurity Program to a balanced approach that prioritizes both Reactive and Proactiv...![]()

CISO Perspective with George Jones: The Top 10 Metrics for Evaluating Asset Visibility Programs

Organizations face a multitude of threats ranging from sophisticated cyberattacks to regulatory comp...![]() Datasheet

DatasheetManaged Detection and Response Services

Human-Driven MDR Enhanced With Proactive Cybersecurity Intelligence Increase your security operation...![]() Video

VideoStop Drowning in Logs: How Tailored Log Management and Premier Threat Detection Keep You Afloat

Are you overwhelmed by security logs and complex threat detection? Watch our on-demand webinar to le...![]() Datasheet

DatasheetCritical Start MDR Services for Operational Technology

Gain 24x7x365 visibility and threat detection across Information Technology (IT) and Operational Tec...

Newsletter Signup

Stay up-to-date on the latest resources and news from CRITICALSTART.

Thanks for signing up!